The internet was built to share information, but today, it is just as effectively used to obscure it. For security professionals and information analysts, the challenge isn't just finding data - it is determining the intent behind that data. We are seeing a shift where digital platforms are weaponized to alter public perception, destabilize institutions, and sow confusion. This is where Open Source Intelligence (OSINT) becomes a defensive necessity.

At Osavul, we monitor these shifts daily. As we discussed in our look at Telegram OSINT tools and the new wave of online investigations, the platforms change, but the methodology of influence remains consistent. To fight back, analysts need to master OSINT for counter-manipulation - the systematic use of public data to identify, track, and neutralize coordinated inauthentic behavior.

What is OSINT on Disinformation?

When people ask, "What is OSINT?" the standard answer usually involves gathering publicly available data to answer intelligence requirements. However, when we apply this to the information war, the definition tightens.

OSINT on disinformation is not just about fact-checking a single image or debunking a specific claim. It is about analyzing the network that spread the claim. It involves mapping the ecosystem of bots, trolls, and coordinated state actors working in unison to amplify a narrative.

The goal is to move from reactive debunking to proactive threat intelligence. Instead of asking "Is this tweet true?", an analyst using OSINT on disinformation asks, "Who funded the creation of this narrative, and why is it trending specifically in this region at this time?"

The Structure of Influence: Understanding FIMI

To counter manipulation, one must understand the framework of Foreign Information Manipulation and Interference (FIMI). This isn't random noise; it is a strategic asset used by hostile actors.

The European External Action Service (EEAS) has done significant work in standardizing how we view these threats. Its resource on Information Integrity and Countering Foreign Information Manipulation and Interference is an excellent source for understanding the behavioral patterns of threat actors. The EEAS describes FIMI as a pattern of behavior that threatens or has the potential to negatively impact values, procedures, and political processes.

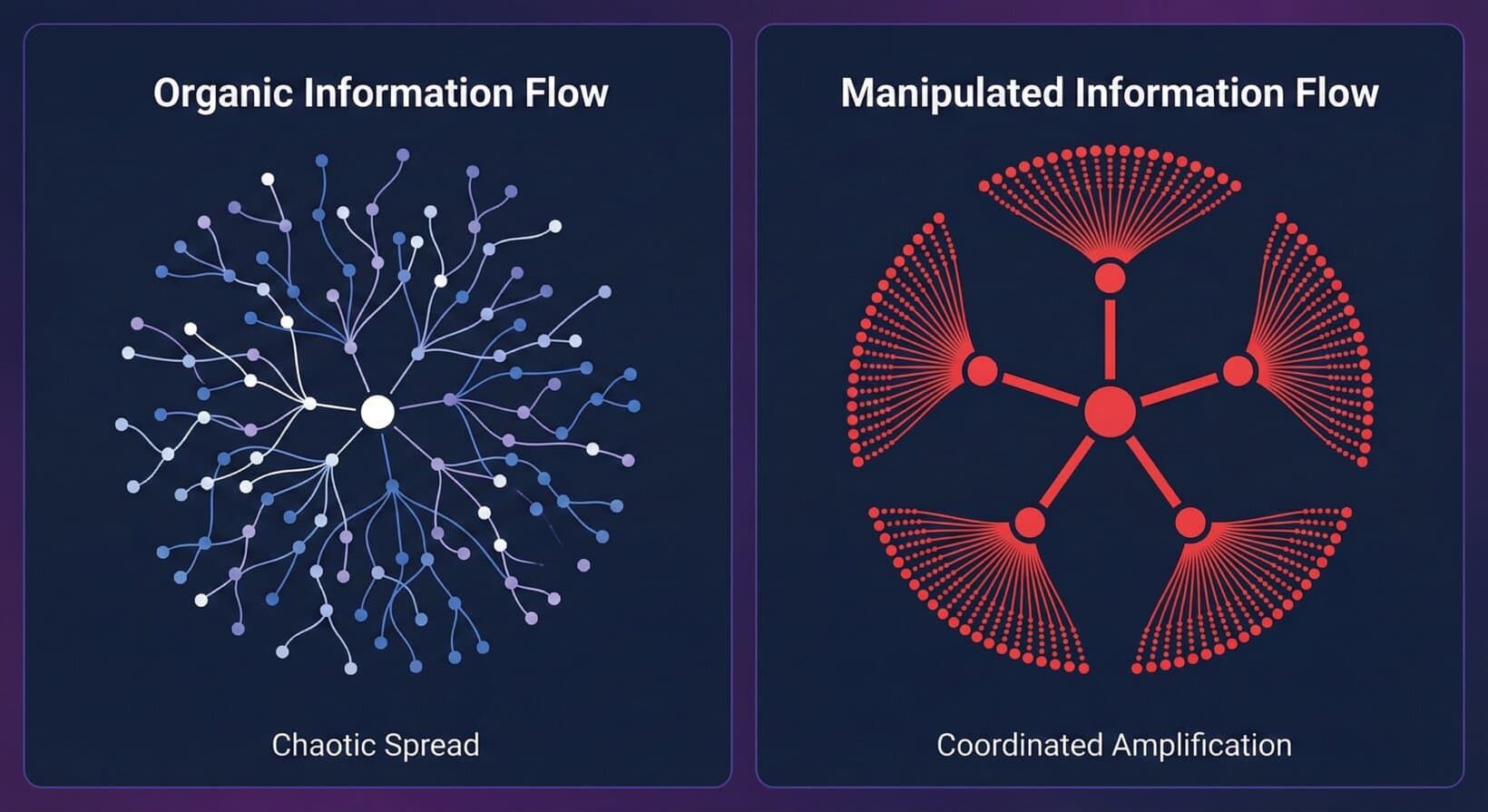

From an OSINT perspective, detecting FIMI requires looking for anomalies. Real news spreads organically; it moves from a patient zero to a wider audience in a chaotic, unpredictable pattern. Manipulated information spreads mechanically. It often hits high velocity immediately, pushed by accounts created on the same day, posting in the same syntax, at the exact same times.
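To make the "mechanical spread" signal concrete, here is a minimal Python sketch that scores two of the anomalies described above: how much of a narrative's volume arrives in its first half hour, and how many of the posting accounts were created on the same day. The `Post` record, the 30-minute window, and the thresholds in `looks_mechanical` are illustrative assumptions, not a description of any production detector.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, datetime, timedelta


@dataclass(frozen=True)
class Post:
    account_id: str
    account_created: date  # creation date of the posting account
    posted_at: datetime    # when this post about the narrative appeared


def early_velocity(posts: list[Post], window: timedelta = timedelta(minutes=30)) -> float:
    """Fraction of all posts published within `window` of the very first post."""
    if not posts:
        return 0.0
    first = min(p.posted_at for p in posts)
    early = sum(1 for p in posts if p.posted_at - first <= window)
    return early / len(posts)


def creation_cohort_share(posts: list[Post]) -> float:
    """Share of distinct accounts belonging to the largest same-creation-day cohort."""
    created = {p.account_id: p.account_created for p in posts}
    if not created:
        return 0.0
    _, biggest = Counter(created.values()).most_common(1)[0]
    return biggest / len(created)


def looks_mechanical(posts: list[Post], velocity_threshold: float = 0.7,
                     cohort_threshold: float = 0.5) -> bool:
    """Hypothetical rule of thumb combining both signals; thresholds are illustrative."""
    return (early_velocity(posts) >= velocity_threshold
            and creation_cohort_share(posts) >= cohort_threshold)
```

Neither signal proves coordination on its own. A genuine breaking-news event can also produce an early spike, and a handful of accounts can share a creation date by chance, so we treat these scores as leads for an analyst to review rather than verdicts.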

How Do Analysts Trace the Source of a Narrative?

One of the most frequent questions we encounter is about attribution. How do you prove a campaign is coordinated?

The process begins with data collection across multiple platforms. Disinformation rarely stays on one site; it might originate on a fringe forum, get amplified by bots on X (formerly Twitter), and then be shared into closed groups on Telegram or WhatsApp.

Effective OSINT for counter-manipulation relies on cross-platform correlation. Analysts look for "co-occurrences." If five different "news" sites with opaque ownership publish the exact same article within three minutes of each other, that is a signal. If a thousand accounts share those links using the same hashtags, that is a signature.
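The five-sites-in-three-minutes signal can be checked mechanically. The Python sketch below assumes articles have already been scraped into `(site, published_at, body)` tuples. It fingerprints each body with SHA-256 after normalizing case and whitespace, then slides a three-minute window over each fingerprint's publication times. The function names and defaults are hypothetical.

```python
import hashlib
import re
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Iterable


def fingerprint(body: str) -> str:
    """SHA-256 of the article body after lower-casing and collapsing whitespace."""
    normalized = re.sub(r"\s+", " ", body.lower()).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def co_occurrences(
    articles: Iterable[tuple[str, datetime, str]],
    window: timedelta = timedelta(minutes=3),
    min_sites: int = 5,
) -> dict[str, list[str]]:
    """Return {fingerprint: sites} for bodies that at least `min_sites` distinct
    sites published inside a single `window`."""
    by_hash = defaultdict(list)
    for site, published_at, body in articles:
        by_hash[fingerprint(body)].append((published_at, site))

    flagged = {}
    for digest, hits in by_hash.items():
        hits.sort()  # order each fingerprint's publications by time
        start = 0
        # Two-pointer sliding window over the time-ordered publications.
        for end in range(len(hits)):
            while hits[end][0] - hits[start][0] > window:
                start += 1
            sites = {site for _, site in hits[start:end + 1]}
            if len(sites) >= min_sites:
                flagged[digest] = sorted(sites)
                break
    return flagged
```

Exact hashing only catches verbatim copies. Lightly rewritten articles need a fuzzier comparison, such as shingling with Jaccard similarity or MinHash, which this sketch does not attempt.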

We look for technical fingerprints:

- Metadata analysis: Do the images or videos share the same creation date, or carry traces of editing software unique to a specific group?

- Linguistic forensics: Are the posts using specific phrasing or grammatical errors common to a non-native speaker from a specific country?

- Temporal analysis: Are the accounts posting during the working hours of a specific time zone, regardless of where they claim to be located?
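The temporal-analysis question can be approached with a simple histogram. This hedged Python sketch counts posts per UTC hour, then tests every whole-hour UTC offset to find the one whose local 09:00 to 17:00 office day covers the most activity. The office-hours assumption, the function names, and the whole-hour offsets are all simplifications: real operators may work shifts, and daylight saving time and half-hour zones are ignored.

```python
from collections import Counter
from datetime import datetime, timezone


def hourly_profile(timestamps: list[datetime]) -> list[int]:
    """Posts per UTC hour of day (index 0-23). Expects timezone-aware datetimes."""
    counts = Counter(ts.astimezone(timezone.utc).hour for ts in timestamps)
    return [counts.get(hour, 0) for hour in range(24)]


def likely_utc_offset(timestamps: list[datetime], workday_start: int = 9,
                      workday_len: int = 8) -> tuple[int, float]:
    """Guess the UTC offset whose local office day best covers the activity.

    Returns (offset_hours, share_of_posts_inside_that_local_workday).
    Offsets run from UTC-11 to UTC+12; on a 24-hour clock UTC+13/+14
    are indistinguishable from UTC-11/-10.
    """
    profile = hourly_profile(timestamps)
    total = sum(profile) or 1
    best_offset, best_count = 0, -1
    for offset in range(-11, 13):
        # Local hour h at UTC+offset corresponds to UTC hour (h - offset) mod 24.
        count = sum(profile[(workday_start + i - offset) % 24] for i in range(workday_len))
        if count > best_count:
            best_offset, best_count = offset, count
    return best_offset, best_count / total
```

A share close to 1.0 for an offset that contradicts the accounts' claimed locations is the kind of mismatch worth investigating; a flat 24-hour profile points instead toward automation.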

By applying OSINT on disinformation, we can strip away the disguise of "grassroots support" (astroturfing) and reveal the mechanical skeleton of the campaign.

How Can OSINT Help in Counter-Terrorism?

While political manipulation is a major focus, the stakes change when we look at violent extremism. The question "How can OSINT help in counter-terrorism?" is critical for national security.

Terrorist organizations function similarly to marketing agencies. They need recruitment, brand awareness, and funding. They leave digital footprints just like any other entity.

OSINT allows investigators to map recruitment funnels. Analysts can track how a user moves from a public YouTube comment section to a semi-private Telegram channel, and finally to an encrypted chat where radicalization occurs. By monitoring public channels, intelligence agencies can identify the "beacons" - the accounts broadcasting propaganda - and map the network of followers who engage with them.

Furthermore, OSINT tools for counter-terrorism are essential for tracking financing. Even when cryptocurrencies are used, the entry and exit points often touch the clear web or public exchanges. Correlating wallet addresses posted on social media with transaction ledgers can reveal the financial supply lines keeping a cell active.
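The first step of that correlation, pulling wallet addresses out of public posts and seeing which accounts reuse the same one, fits in a few lines of Python. This is a hedged sketch: the regular expression only matches the general shape of Bitcoin legacy addresses (Base58, starting with 1 or 3) and lowercase Bech32 addresses (`bc1...`), it does not verify checksums, and the `(account_id, text)` input format is an assumption. Looking the addresses up on a transaction ledger is left out, since that depends on whichever blockchain explorer or node an investigation uses.

```python
import re
from collections import defaultdict
from typing import Iterable

# Shape-only match for Bitcoin addresses: lowercase Bech32 ("bc1...") or
# legacy Base58 starting with 1 or 3. Checksums are NOT validated.
BTC_ADDRESS = re.compile(
    r"\b(?:bc1[ac-hj-np-z02-9]{11,71}|[13][a-km-zA-HJ-NP-Z1-9]{25,34})\b"
)


def extract_addresses(text: str) -> list[str]:
    """Every whole token in `text` that looks like a Bitcoin address."""
    return BTC_ADDRESS.findall(text)


def shared_addresses(posts: Iterable[tuple[str, str]],
                     min_accounts: int = 2) -> dict[str, list[str]]:
    """Addresses posted by at least `min_accounts` distinct accounts.

    `posts` holds (account_id, text) pairs; returns {address: sorted account ids}.
    """
    seen = defaultdict(set)
    for account, text in posts:
        for address in extract_addresses(text):
            seen[address].add(account)
    return {addr: sorted(accts) for addr, accts in seen.items() if len(accts) >= min_accounts}
```

An address reused across several supposedly unrelated channels is a link between them, even before any ledger data is consulted.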

Automation and the Speed of Response

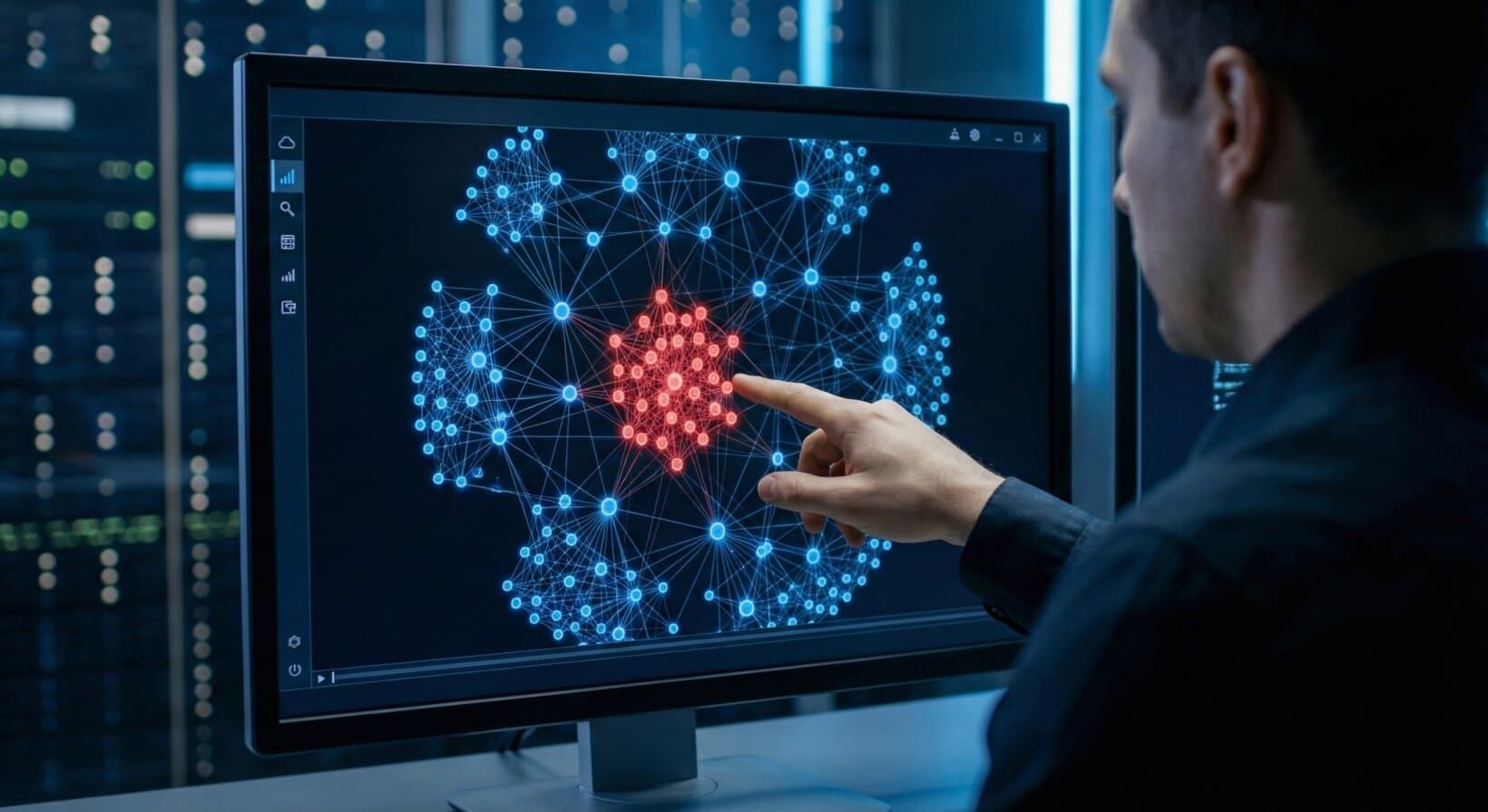

The sheer volume of data involved in OSINT on disinformation operations is too massive for manual processing alone. If a hostile state launches a bot attack involving 50,000 accounts, a human analyst cannot check them one by one.

This is where automated solutions come into play. Tools like our Nebula solution allow analysts to ingest massive datasets and visualize the connections quickly. Automation helps in:

- Sentiment Analysis: Quickly determining if the conversation around a topic is shifting negatively due to artificial injection.

- Bot Detection: Algorithmic identification of accounts that exhibit non-human behavior (posting frequency, lack of sleep patterns, network centrality).

- Network Graphing: Visualizing the "clusters" of accounts to see who the central nodes (leaders) are.
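The network-graphing step can be sketched without any graph library. Assuming the collected data has been reduced to `(account_id, url)` pairs, the Python below links accounts that shared the same URL, splits the graph into connected clusters, and ranks accounts by degree (how many distinct accounts they are linked to) as a rough stand-in for "central nodes". It is an illustrative simplification, not a description of how Nebula works, and the function names are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def co_share_graph(shares):
    """Weighted, undirected co-sharing graph.

    `shares` is an iterable of (account_id, url) pairs. Two accounts are linked
    when they shared the same URL; the weight counts URLs they have in common.
    Returns {account: {neighbour: weight}}.
    """
    by_url = defaultdict(set)
    for account, url in shares:
        by_url[url].add(account)
    graph = defaultdict(dict)
    for accounts in by_url.values():
        for a, b in combinations(sorted(accounts), 2):
            graph[a][b] = graph[a].get(b, 0) + 1
            graph[b][a] = graph[b].get(a, 0) + 1
    return dict(graph)


def clusters(graph):
    """Connected components, found with an iterative depth-first search."""
    seen, components = set(), []
    for node in graph:
        if node in seen:
            continue
        stack, component = [node], set()
        while stack:
            current = stack.pop()
            if current in component:
                continue
            component.add(current)
            stack.extend(n for n in graph[current] if n not in component)
        seen |= component
        components.append(component)
    return components


def central_nodes(graph, top=3):
    """Accounts with the most distinct neighbours (degree centrality), ties by name."""
    return sorted(graph, key=lambda n: (-len(graph[n]), n))[:top]
```

Pairing every co-sharer is quadratic in the number of accounts per URL, so heavily shared links need sampling or a dedicated library; NetworkX, for example, provides `connected_components` and `degree_centrality`.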

By automating the collection and sorting phases, analysts can focus on the assessment phase. OSINT for counter-manipulation is most effective when human intuition is paired with machine speed.

Common Challenges in Information Defense

A popular question in the industry is: "Can OSINT be used to manipulate?" The answer is yes. The same tools used to find the truth can be used to dox individuals or construct false narratives. That is why ethical guidelines and a neutral perspective are non-negotiable.

Another challenge is the "Splinternet." As the internet fragments into regional, regulated, or firewalled zones, gathering data becomes harder. OSINT on disinformation requires adaptability. If a threat actor moves to a new platform or a decentralized server (such as a Mastodon instance or another corner of the Fediverse), the analyst must follow.

We also face the issue of "cheapfakes" and deepfakes. While deepfakes get the headlines, cheapfakes (media that is simply mislabeled or taken out of context) are far more common. An old photo of a tank from 2015 might be reposted with the claim that it shows a conflict in 2024. Reverse image searching is the primary defense here, but it requires an analyst to be skeptical of everything they see.
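Reverse image search relies on external engines, but a related local check helps when a team keeps an archive of already verified photos: perceptual hashing. Below is a dependency-free Python sketch of an average hash (aHash), one simple perceptual hash. It assumes the image has already been decoded into a grayscale grid of 0-255 values (in practice, with a library such as Pillow). Images whose hashes differ in only a few bits are likely the same picture, even after a uniform brightness change. This is a swapped-in illustrative technique, not how any particular reverse image search engine works.

```python
def average_hash(pixels: list[list[int]], size: int = 8) -> int:
    """size*size-bit average hash of a grayscale image given as rows of 0-255 ints.

    The image is shrunk to a size x size grid by block-averaging, then each
    cell becomes bit 1 if it is brighter than the mean of all cells.
    """
    height, width = len(pixels), len(pixels[0])
    if height < size or width < size:
        raise ValueError("image is smaller than the hash grid")
    cells = []
    for r in range(size):
        for c in range(size):
            rows = range(r * height // size, (r + 1) * height // size)
            cols = range(c * width // size, (c + 1) * width // size)
            block = [pixels[y][x] for y in rows for x in cols]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | (value > mean)  # first cell ends up as the top bit
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")
```

Cropping, mirroring, or heavy edits defeat a hash this simple, so it complements rather than replaces reverse image search.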

Building Resilience

The ultimate goal of using OSINT on disinformation is not just to delete bots or block IPs. It is to build societal resilience. When we can show the public exactly how a manipulation campaign was constructed - showing the logs, the timestamps, and the coordinated networks - we reduce the campaign's power.

Disinformation works best in the dark. It relies on the target believing that the "outrage" they see online is real and widespread. By engaging in OSINT on disinformation, we turn the lights on. We provide the context that threat actors try so hard to remove.

For organizations protecting their brand, or governments protecting their democratic processes, OSINT for counter-manipulation is the shield. It provides the situational awareness needed to distinguish between a genuine crisis and a manufactured attack.

The digital space will likely remain a contested domain. However, with the right tools and a disciplined methodology, we can ensure that facts still have a fighting chance against fabrication.