How to Predict Risks

Predicting risks requires transitioning from analyzing past incidents to processing real-time data streams using machine learning models that identify anomalous patterns before they escalate. It is about anticipating vulnerabilities in cognitive security, supply chains, and market positioning before they register on a standard balance sheet. Executives must rethink threat intelligence as a live, continuous feed rather than a quarterly audit.

During routine technical audits of enterprise security architectures, the glaring gap is almost always latency. Teams find out about a narrative attack or a compromised network node hours - sometimes days - after the damage begins. The modern information environment moves too fast for that. A localized rumor can erode a brand's market cap globally within a single trading session. By integrating AI-driven OSINT and media forensics, you flip the operational posture from damage control to proactive defense.

Why Standard Deviation and Historic Data Aren't Enough

Relying solely on standard deviation and historic data fails because modern threat vectors - like coordinated botnet attacks and deepfake campaigns - do not follow traditional statistical distributions. They are asymmetrical, highly targeted, and rapidly evolving. Past performance cannot model a synthetic media smear campaign that did not exist twelve months ago.

A traditional risk management process looks backward. Analysts calculate the variance of past events and assume the future will look somewhat similar. Information warfare completely breaks that assumption. If an adversarial group launches a coordinated disinformation campaign against your company's upcoming product launch, historical sales data will not warn you. You need dynamic risk measurement that evaluates the real-time sentiment and velocity of digital narratives.

Tip 1: Deploy Risk Prediction Software for Real-Time Threat Landscape Monitoring

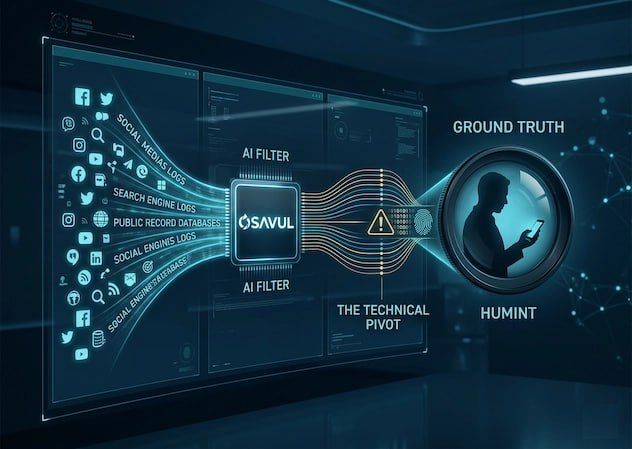

Risk prediction software operates by ingesting massive datasets - from social media chatter to dark web forums - and applying natural language processing to flag emerging threats instantly. This provides a continuous, automated radar for your organization's most critical assets. By mapping these signals against known vulnerability frameworks, security teams intercept threats at their inception.

The initial integration of these systems focuses on mapping your specific attack surface. Once calibrated, the software cross-references incoming data against known manipulation tactics. Instead of a security officer manually scanning news feeds, the system delivers an alert detailing the origin, spread rate, and potential impact of a hostile narrative. This allows executive decision-makers to implement countermeasures while the threat is still contained within fringe networks.

Assessing Risk in Business Environments Across Borders

Assessing risk in a business environment across global borders requires localized data ingestion that accounts for regional media ecosystems, distinct regulatory frameworks, and culturally specific disinformation tactics. A monolithic approach will generate false positives and miss nuanced, region-specific attacks. You must train models on the local dialect of the threat.

Case Context: Identifying Disinformation in the United States and Germany

The tactics used to destabilize a brand differ wildly by region. During our tracking of cross-border narrative attacks, we noticed distinct botnet coordination patterns based on the target audience. In the United States, threat actors frequently exploit hyper-partisan news cycles, using automated accounts to amplify wedge issues and associate corporate entities with polarizing topics to trigger consumer boycotts.

Conversely, in Germany, we observe more sophisticated, slower-burning media manipulation. Attacks here often target regulatory compliance, environmental records, or supply chain ethics, leveraging synthetic media to fabricate "leaked" documents. Using predictive AI, you catch the seed of the narrative - perhaps a sudden, anomalous spike in specific keyword mentions on regional Telegram channels - long before it breaches mainstream financial news.

Tip 2: Quantify Risk Using Advanced Machine Learning Models

To effectively quantify risk, organizations must replace subjective heat maps with algorithmic models that assign probabilistic weights to threat vectors using real-time data ingestion. This means estimating the likelihood of a vulnerability being exploited and multiplying it by the projected financial impact, then refreshing that estimate as new data arrives. Guesswork gives way to a repeatable, data-driven estimate.
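As a minimal sketch of the likelihood-times-impact calculation described above, the snippet below ranks threats by expected loss. The class name, function names, and every number are hypothetical illustrations, not figures from a real assessment.

```python
from dataclasses import dataclass


@dataclass
class ThreatEstimate:
    name: str
    probability: float  # estimated likelihood of exploitation, 0.0 to 1.0
    impact_usd: float   # projected financial impact if the threat lands


def expected_loss(threat: ThreatEstimate) -> float:
    """Expected loss = likelihood of exploitation x projected impact."""
    if not 0.0 <= threat.probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return threat.probability * threat.impact_usd


def rank_by_expected_loss(threats):
    """Return threats ordered from highest to lowest expected loss."""
    return sorted(threats, key=expected_loss, reverse=True)


# Hypothetical inputs; a live system would refresh these as new data arrives.
threats = [
    ThreatEstimate("cloud outage", 0.5, 500_000),
    ThreatEstimate("botnet smear campaign", 0.25, 4_000_000),
]
ranked = rank_by_expected_loss(threats)
```

In this example the smear campaign ranks first despite its lower probability, because its projected impact is much larger.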

During our technical audits of enterprise security operations, we consistently see risk teams struggling to assign hard numbers to cognitive security threats. You can easily calculate the hourly cost of a downed AWS server, but pricing the impact of a coordinated botnet smear campaign requires a different engine. AI models bridge this gap by analyzing the velocity, reach, and sentiment of hostile narratives before they hit the mainstream.

How to Quantify Risk Without Relying on Static Spreadsheets

Static spreadsheets fail because they treat threats as isolated, static events. True risk measurement demands dynamic models that understand context and momentum. By feeding raw OSINT data into machine learning algorithms, you map out the interconnected, non-linear nature of modern threat environments, converting raw noise into actionable financial metrics.

We rely on a specific, multi-layered framework to move from qualitative guesses to quantitative estimates:

- Continuous Data Ingestion: Scraping fringe social platforms, dark web forums, and regional news to establish a baseline of normal brand chatter.

- Algorithmic Anomaly Detection: Flagging deviations from the baseline, such as a sudden, localized 400% spike in negative brand mentions.

- Probabilistic Impact Modeling: Running simulations to project potential revenue loss and reputational damage if the narrative breaches tier-one media outlets.
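The three layers above can be sketched roughly as follows. This is an illustrative outline under stated assumptions, not a production pipeline: the baseline is a hard-coded list standing in for ingested data, the anomaly test is a simple z-score, and the impact model is a basic Monte Carlo simulation with a uniform loss range. All thresholds, probabilities, and dollar figures are hypothetical.

```python
import random
import statistics


def is_anomalous(baseline, latest, z_threshold=3.0):
    """Flag `latest` if it sits more than z_threshold standard
    deviations above the mean of the baseline counts."""
    mean = statistics.mean(baseline)
    stdev = statistics.stdev(baseline)
    if stdev == 0:
        return latest != mean
    return (latest - mean) / stdev > z_threshold


def simulate_loss(p_breach, loss_low, loss_high, runs=10_000, seed=0):
    """Average simulated loss, assuming the narrative reaches tier-one
    media with probability p_breach and the loss is uniform in range."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(runs):
        if rng.random() < p_breach:
            total += rng.uniform(loss_low, loss_high)
    return total / runs


# Hypothetical hourly counts of negative brand mentions (the baseline).
baseline = [40, 38, 45, 42, 39, 41, 44]
latest = 205  # roughly a 400% jump over the baseline mean

spike = is_anomalous(baseline, latest)
projected = simulate_loss(0.2, 1_000_000, 3_000_000)
```

A real deployment would likely swap the z-score for a model that handles seasonality, and the uniform loss range for a distribution fitted to past incidents.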

Tip 3: Automate Supply Chain and Investment Risk Detection

Automating supply chain and investment risk detection requires deploying AI agents to monitor massive, unstructured global datasets - shipping logs, geopolitical news feeds, and regional vendor filings - for anomalies that precede physical disruptions. This continuous scanning allows operations teams to reroute logistics or adjust portfolios before a localized issue becomes a global bottleneck.

When you evaluate how to analyze risk across global enterprise portfolios, the sheer volume of variables immediately outstrips human cognitive capacity. We routinely see investment firms caught off guard by secondary sanctions or sudden regulatory shifts simply because their analysts couldn't parse the subtle indicators buried in local, foreign-language media. For technical implementers building these internal systems, referencing established engineering frameworks on risk prediction provides a solid architectural foundation for algorithmic development.

Protecting Project Schedules in High-Stakes Hubs like Singapore and the UAE

High-stakes logistics hubs require absolute precision, as a bottleneck in a single port cascades rapidly through an enterprise project schedule. Traditional tracking relies on delayed official reports from port authorities, which means you are reacting to a delay that is already actively bleeding capital. Predictive systems cut through the reporting lag.

In our continuous monitoring of maritime and logistics chatter, AI models excel at flagging operational anomalies early. For example, they can track localized labor dispute keywords or sudden maritime rerouting discussions in key transit zones like Singapore or the United Arab Emirates (UAE). Catching these weak signals allows COOs to activate contingency plans days before the delay materializes on the corporate bottom line.

Tip 4: Implement Cognitive Security for Reputational Defense

Cognitive security focuses on protecting the information environment from narrative attacks that aim to manipulate public perception or erode stakeholder trust. To predict these risks, companies must move beyond traditional cybersecurity - which protects data - and adopt tools that protect the "logic" of their brand. This involves identifying coordinated inauthentic behavior and synthetic media before they reach a critical mass.

In our recent tracking of technical vulnerabilities in South Korea’s technology sector, we observed that narrative-based threats often precede financial fluctuations. Adversaries don't just hack a system; they "hack" the public’s belief in the system. By deploying AI to monitor the velocity of specific keywords across cross-platform ecosystems, organizations can identify the "patient zero" of a smear campaign and neutralize it before it triggers a sell-off.
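To make the idea of keyword velocity and a "patient zero" concrete, here is a small sketch that finds the earliest post mentioning a phrase and measures mentions per hour. The post structure, account names, and the `examplecorp recall` phrase are invented for illustration; real attribution of coordinated behavior needs far more than a substring match.

```python
from datetime import datetime


def matching_posts(posts, keyword):
    """Posts whose text contains the keyword, case-insensitively."""
    needle = keyword.lower()
    return [p for p in posts if needle in p["text"].lower()]


def first_mention(posts, keyword):
    """Earliest matching post, or None if nothing matches."""
    matches = matching_posts(posts, keyword)
    return min(matches, key=lambda p: p["time"]) if matches else None


def mentions_per_hour(posts, keyword):
    """Matching posts divided by the hours between first and last match."""
    times = [p["time"] for p in matching_posts(posts, keyword)]
    if len(times) < 2:
        return float(len(times))
    span_hours = (max(times) - min(times)).total_seconds() / 3600
    return len(times) / span_hours if span_hours > 0 else float(len(times))


# Hypothetical sample data standing in for a cross-platform feed.
posts = [
    {"time": datetime(2024, 1, 1, 9, 0), "platform": "forum",
     "author": "acct_b", "text": "ExampleCorp recall rumor spreading"},
    {"time": datetime(2024, 1, 1, 8, 0), "platform": "forum",
     "author": "acct_a", "text": "Heard about an examplecorp recall"},
    {"time": datetime(2024, 1, 1, 10, 0), "platform": "social",
     "author": "acct_c", "text": "ExampleCorp recall confirmed?"},
    {"time": datetime(2024, 1, 1, 7, 0), "platform": "social",
     "author": "acct_d", "text": "unrelated weather post"},
]

origin = first_mention(posts, "examplecorp recall")
velocity = mentions_per_hour(posts, "examplecorp recall")
```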

Three Ways to Evaluate a Risk Factor in Information Warfare

Evaluating a risk factor within the context of information warfare requires a departure from standard impact-probability matrices. Instead, technical implementers must look at the structural integrity of the narrative itself. By quantifying these variables, you can determine which digital "smoke" is likely to turn into a corporate "fire."

We recommend evaluating a narrative risk factor along these three dimensions:

- Source Authority and Reach: Analyzing whether a narrative originates from a coordinated, high-reach botnet or a genuine grassroots movement to determine its long-term viability.

- Narrative Resonance: Using AI to measure how well a hostile story aligns with existing cultural or economic anxieties in a target demographic.

- Cross-Platform Velocity: Tracking how quickly a story jumps from an isolated forum into mainstream corporate news feeds.
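One way to combine the three factors above into a single comparable number is a weighted score, sketched below. The field names, the 0-to-1 scaling, and the weights are assumptions chosen for illustration; in practice each input would come from its own upstream model and the weights would need calibration.

```python
from dataclasses import dataclass


@dataclass
class NarrativeSignal:
    # Each field is assumed to be pre-scaled to the range 0.0 to 1.0.
    source_reach: float
    resonance: float
    cross_platform_velocity: float


# Hypothetical weights; they must sum to 1.0 to keep the score in 0..1.
WEIGHTS = {
    "source_reach": 0.3,
    "resonance": 0.3,
    "cross_platform_velocity": 0.4,
}


def narrative_risk_score(signal: NarrativeSignal, weights=WEIGHTS) -> float:
    """Weighted sum of the three factors, from 0 (benign) to 1 (severe)."""
    score = 0.0
    for factor, weight in weights.items():
        value = getattr(signal, factor)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{factor} must be between 0 and 1")
        score += weight * value
    return score


signal = NarrativeSignal(source_reach=0.5, resonance=0.5,
                         cross_platform_velocity=1.0)
score = narrative_risk_score(signal)
```

A linear weighting is the simplest possible choice; it makes the trade-offs explicit and easy to audit, at the cost of ignoring interactions between factors.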

Tip 5: Integrate Anomaly Detection into the Risk Management Process

Integrating anomaly detection into the risk management process ensures that strategic planning is based on live operational data rather than quarterly snapshots. This creates a "living" risk register that adjusts its priorities based on real-time deviations in market sentiment, network traffic, or supply chain logistics. It allows risk officers to stay ahead of algorithmic volatility that can disrupt business operations.

Effective risk management in the AI era requires a feedback loop where predictive models inform the strategic planning phase of every project management cycle. If the AI flags an anomaly in vendor behavior or a sudden shift in regional regulatory discussions, the team must have a protocol to pivot resources instantly. This agility separates resilient enterprises from those that are merely compliant.
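A "living" risk register of the kind described above could be sketched as a small structure whose ordering changes whenever an anomaly score is updated. The class names and the priority formula (base priority scaled by one plus the anomaly score) are hypothetical simplifications, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class RiskEntry:
    name: str
    base_priority: float        # set during periodic planning
    anomaly_score: float = 0.0  # updated from live monitoring

    @property
    def priority(self) -> float:
        # Assumed formula: anomalies scale the planned priority upward.
        return self.base_priority * (1.0 + self.anomaly_score)


class RiskRegister:
    def __init__(self):
        self._entries = {}

    def add(self, name, base_priority):
        self._entries[name] = RiskEntry(name, base_priority)

    def update_anomaly(self, name, score):
        """Record a new anomaly score; negative scores are clamped to 0."""
        self._entries[name].anomaly_score = max(0.0, score)

    def ranked(self):
        """Entries ordered from highest to lowest current priority."""
        return sorted(self._entries.values(),
                      key=lambda e: e.priority, reverse=True)


register = RiskRegister()
register.add("regional regulatory shift", 3.0)
register.add("vendor reliability", 2.0)
before = [e.name for e in register.ranked()]

# A flagged anomaly in vendor behavior moves that entry to the top.
register.update_anomaly("vendor reliability", 1.0)
after = [e.name for e in register.ranked()]
```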

How to Monitor Risk Continuously at the Enterprise Level

To monitor risk effectively, an organization must establish a unified security operations center that merges information security with business intelligence. This "single pane of glass" view lets teams analyze risk across disparate departments - from HR to logistics - ensuring that no signal is lost in a departmental silo.

Building this infrastructure requires a heavy emphasis on data integrity during the technical audit phase. You must ensure that the AI tools you are using to predict risks are not themselves vulnerable to data poisoning. At Osavul, we emphasize that the most robust defense is one that combines machine-scale data processing with human-expert narrative analysis.

FAQs on AI-Driven Enterprise Risk Management

What types of business risks can AI most accurately predict?

AI most accurately predicts high-velocity, unstructured risks - such as coordinated narrative attacks, localized supply chain bottlenecks, and sudden shifts in market sentiment - before they breach mainstream awareness.

It excels where human cognitive capacity fails: processing millions of disparate data points in real-time. When executives ask how to predict risks in the information environment, we point to the velocity of unstructured data. During recent monitoring of corporate smear campaigns, we saw AI flag subtle botnet coordination days before the narrative impacted the target's stock price.

Can small businesses use AI for risk prediction?

Yes, small businesses can use AI for risk prediction by integrating scalable, cloud-based risk prediction software instead of attempting to build expensive, in-house machine learning models. This approach democratizes enterprise-grade intelligence, allowing leaner teams to monitor localized threats. A small or mid-sized company doesn't need a massive security operations center to benefit from these tools. By automating the risk management process, a smaller operations team can monitor regional vendor reliability or regulatory chatter, safeguarding their project schedule effectively.

What happens if the AI makes a wrong prediction?

If an AI model generates a false positive or misses a critical signal, the resulting operational fallout must be mitigated by pre-established legal and compliance frameworks. You cannot outsource fiduciary responsibility to an algorithm. When you evaluate how to quantify risk in automated environments, the primary safeguard is the audit trail.

Regulators in strict jurisdictions like Germany or the United States require proof of due diligence. If your system flags a false investment risk that causes an operational shutdown, your compliance officers need documented technical audits proving the model's logic was sound and human-reviewed.
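As one possible shape for such an audit trail, the sketch below chains each record to the previous one with a SHA-256 hash so that later edits are detectable. The field names, identifiers, and chaining scheme are illustrative assumptions; they are not a regulatory requirement or a substitute for legal advice on record keeping.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def make_audit_record(prev_hash, alert_id, model_version,
                      decision, reviewer, timestamp=None):
    """Build a record that names the human reviewer and links to the
    previous record's hash."""
    body = {
        "prev_hash": prev_hash,
        "alert_id": alert_id,
        "model_version": model_version,
        "decision": decision,
        "reviewer": reviewer,
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
    }
    return {**body, "hash": _digest(body)}


def verify_chain(records):
    """True only if every record is unmodified and correctly linked."""
    prev = GENESIS_HASH
    for record in records:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


# Hypothetical alerts and reviewers.
first = make_audit_record(GENESIS_HASH, "alert-001", "model-v1",
                          "false positive, no action", "analyst_a")
second = make_audit_record(first["hash"], "alert-002", "model-v1",
                           "escalated to compliance", "analyst_b")
log = [first, second]
```

Calling `verify_chain(log)` returns `True` for the untouched log and `False` if any stored field is changed afterward.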

Conclusion: Securing the Narrative and the Bottom Line

Predictive intelligence is no longer optional. Waiting for a threat to materialize on a balance sheet means you have already lost the initiative. To survive modern information warfare and operational volatility, companies must measure risk at the algorithmic level.

The architecture of risk analysis has fundamentally changed. By embedding AI-driven anomaly detection and cognitive security protocols into your daily operations, you transform your threat posture from reactive to predictive. Whether you are safeguarding physical logistics or deploying advanced OSINT capabilities to neutralize a digital smear campaign, the objective remains the same: identify the spark before it burns down the enterprise.